
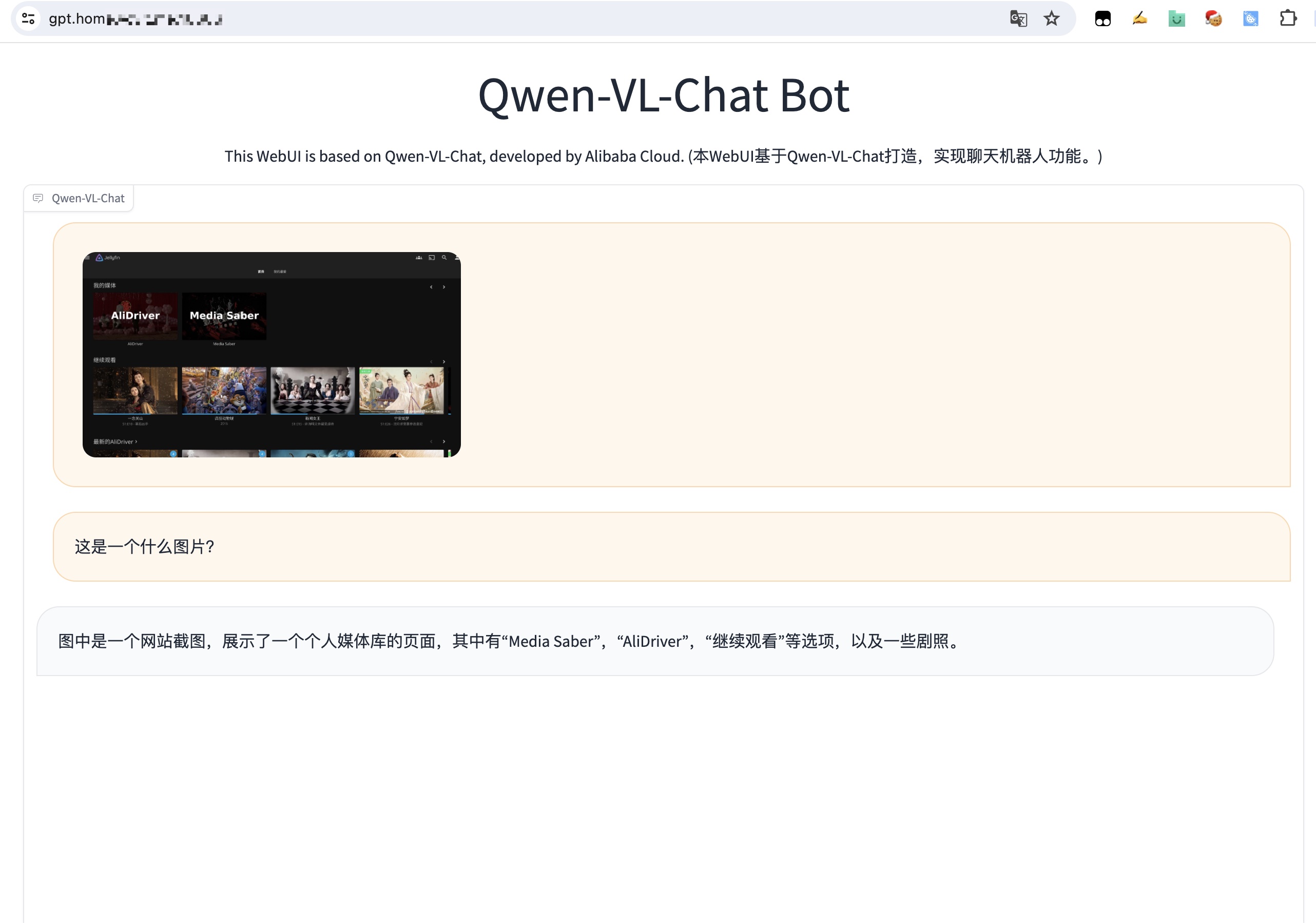

```python
"""A simple web interactive chat demo based on gradio."""

from argparse import ArgumentParser
from pathlib import Path
import copy
import gradio as gr
import os
import re
import secrets
import tempfile

DEFAULT_CKPT_PATH = 'Qwen/Qwen-VL-Chat'
BOX_TAG_PATTERN = r"<box>([\s\S]*?)</box>"
PUNCTUATION = "！？。＂＃＄％＆＇（）＊＋，－／：；＜＝＞＠［＼］＾＿｀｛｜｝～｟｠｢｣､、〃》「」『』【】〔〕〖〗〘〙〚〛〜〝〞〟〰〾〿–—‘’‛“”„‟…‧﹏."

# Expose only the first GPU. This must be set before CUDA is initialized.
os.environ['CUDA_VISIBLE_DEVICES'] = '0'

from modelscope import (
    snapshot_download, AutoModelForCausalLM, AutoTokenizer, GenerationConfig,
)
from transformers import BitsAndBytesConfig
import torch

# Download Qwen-VL-Chat v1.1.0 from ModelScope when the module is imported.
# _load_model_tokenizer() loads from this directory, so --checkpoint-path is not used.
model_id = 'qwen/Qwen-VL-Chat'
revision = 'v1.1.0'
model_dir = snapshot_download(model_id, revision=revision)

torch.manual_seed(1234)


def _get_args():
    parser = ArgumentParser()
    parser.add_argument("-c", "--checkpoint-path", type=str, default=DEFAULT_CKPT_PATH,
                        help="Checkpoint name or path, default to %(default)r")
    parser.add_argument("--cpu-only", action="store_true", help="Run demo with CPU only")
    parser.add_argument("--share", action="store_true", default=False,
                        help="Create a publicly shareable link for the interface.")
    parser.add_argument("--inbrowser", action="store_true", default=False,
                        help="Automatically launch the interface in a new tab on the default browser.")
    parser.add_argument("--server-port", type=int, default=8000,
                        help="Demo server port.")
    parser.add_argument("--server-name", type=str, default="0.0.0.0",
                        help="Demo server name.")

    args = parser.parse_args()
    return args


def _load_model_tokenizer(args):
    # Note: args.checkpoint_path and args.cpu_only are currently ignored;
    # the model is always loaded from the ModelScope snapshot in model_dir.
    tokenizer = AutoTokenizer.from_pretrained(model_dir, trust_remote_code=True)

    # 4-bit NF4 weights with double quantization and fp16 compute;
    # modules matching 'lm_head' and 'attn_pool.attn' are left unquantized.
    quantization_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_compute_dtype=torch.float16,
        bnb_4bit_quant_type='nf4',
        bnb_4bit_use_double_quant=True,
        llm_int8_skip_modules=['lm_head', 'attn_pool.attn'])

    model = AutoModelForCausalLM.from_pretrained(
        model_dir, device_map="auto", trust_remote_code=True,
        offload_folder="offload_folder", fp16=True,
        quantization_config=quantization_config,
    ).eval()
    model.generation_config = GenerationConfig.from_pretrained(model_dir, trust_remote_code=True)

    return model, tokenizer


def _parse_text(text):
    # Convert chat text to HTML for gr.Chatbot: ``` fences become <pre><code> blocks,
    # and characters inside a code block are escaped so they render literally.
    lines = text.split("\n")
    lines = [line for line in lines if line != ""]
    count = 0
    for i, line in enumerate(lines):
        if "```" in line:
            count += 1
            items = line.split("`")
            if count % 2 == 1:
                # Opening fence; the text after ``` is used as the language class.
                lines[i] = f'<pre><code class="language-{items[-1]}">'
            else:
                # Closing fence.
                lines[i] = "<br></code></pre>"
        else:
            if i > 0:
                if count % 2 == 1:
                    line = line.replace("`", r"\`")
                    line = line.replace("<", "&lt;")
                    line = line.replace(">", "&gt;")
                    line = line.replace(" ", "&nbsp;")
                    line = line.replace("*", "&ast;")
                    line = line.replace("_", "&lowbar;")
                    line = line.replace("-", "&#45;")
                    line = line.replace(".", "&#46;")
                    line = line.replace("!", "&#33;")
                    line = line.replace("(", "&#40;")
                    line = line.replace(")", "&#41;")
                    line = line.replace("$", "&#36;")
                lines[i] = "<br>" + line
    text = "".join(lines)
    return text


def _launch_demo(args, model, tokenizer):
    uploaded_file_dir = os.environ.get("GRADIO_TEMP_DIR") or str(
        Path(tempfile.gettempdir()) / "gradio"
    )

    def predict(_chatbot, task_history):
        chat_query = _chatbot[-1][0]
        query = task_history[-1][0]
        print("User: " + _parse_text(query))
        history_cp = copy.deepcopy(task_history)
        full_response = ""

        # Rebuild Qwen-VL's prompt format: each uploaded image becomes
        # "Picture N: <img>path</img>" and is prepended to the next text turn.
        history_filter = []
        pic_idx = 1
        pre = ""
        for i, (q, a) in enumerate(history_cp):
            if isinstance(q, (tuple, list)):
                q = f'Picture {pic_idx}: <img>{q[0]}</img>'
                pre += q + '\n'
                pic_idx += 1
            else:
                pre += q
                history_filter.append((pre, a))
                pre = ""
        history, message = history_filter[:-1], history_filter[-1][0]
        response, history = model.chat(tokenizer, message, history=history)

        # If the response contains grounding boxes, draw them on the latest picture.
        image = tokenizer.draw_bbox_on_latest_picture(response, history)
        if image is not None:
            temp_dir = secrets.token_hex(20)
            temp_dir = Path(uploaded_file_dir) / temp_dir
            temp_dir.mkdir(exist_ok=True, parents=True)
            name = f"tmp{secrets.token_hex(5)}.jpg"
            filename = temp_dir / name
            image.save(str(filename))
            _chatbot[-1] = (_parse_text(chat_query), (str(filename),))
            # Show any remaining text without the <ref>/<box> grounding tags.
            chat_response = response.replace("<ref>", "")
            chat_response = chat_response.replace(r"</ref>", "")
            chat_response = re.sub(BOX_TAG_PATTERN, "", chat_response)
            if chat_response != "":
                _chatbot.append((None, chat_response))
        else:
            _chatbot[-1] = (_parse_text(chat_query), response)
        full_response = _parse_text(response)

        task_history[-1] = (query, full_response)
        print("Qwen-VL-Chat: " + _parse_text(full_response))
        return _chatbot

    def regenerate(_chatbot, task_history):
        if not task_history:
            return _chatbot
        item = task_history[-1]
        if item[1] is None:
            return _chatbot
        task_history[-1] = (item[0], None)
        chatbot_item = _chatbot.pop(-1)
        if chatbot_item[0] is None:
            _chatbot[-1] = (_chatbot[-1][0], None)
        else:
            _chatbot.append((chatbot_item[0], None))
        return predict(_chatbot, task_history)

    def add_text(history, task_history, text):
        # Drop a single trailing punctuation mark from the text sent to the model;
        # the text displayed in the chatbot is kept unchanged.
        task_text = text
        if len(text) >= 2 and text[-1] in PUNCTUATION and text[-2] not in PUNCTUATION:
            task_text = text[:-1]
        history = history + [(_parse_text(text), None)]
        task_history = task_history + [(task_text, None)]
        return history, task_history, ""

    def add_file(history, task_history, file):
        history = history + [((file.name,), None)]
        task_history = task_history + [((file.name,), None)]
        return history, task_history

    def reset_user_input():
        return gr.update(value="")

    def reset_state(task_history):
        task_history.clear()
        return []

    with gr.Blocks() as demo:
        gr.Markdown("""<center><font size=8>Qwen-VL-Chat Bot</center>""")
        gr.Markdown(
            """\
<center><font size=3>This WebUI is based on Qwen-VL-Chat, developed by Alibaba Cloud. \
(本WebUI基于Qwen-VL-Chat打造,实现聊天机器人功能。)</center>""")

        chatbot = gr.Chatbot(label='Qwen-VL-Chat', elem_classes="control-height", height=750)
        query = gr.Textbox(lines=2, label='Input')
        task_history = gr.State([])

        with gr.Row():
            empty_bin = gr.Button("🧹 Clear History (清除历史)")
            submit_btn = gr.Button("🚀 Submit (发送)")
            regen_btn = gr.Button("🤔️ Regenerate (重试)")
            addfile_btn = gr.UploadButton("📁 Upload (上传文件)", file_types=["image"])

        # add_text returns three values, so the textbox is listed as a third output.
        submit_btn.click(add_text, [chatbot, task_history, query], [chatbot, task_history, query]).then(
            predict, [chatbot, task_history], [chatbot], show_progress=True
        )
        submit_btn.click(reset_user_input, [], [query])
        empty_bin.click(reset_state, [task_history], [chatbot], show_progress=True)
        regen_btn.click(regenerate, [chatbot, task_history], [chatbot], show_progress=True)
        addfile_btn.upload(add_file, [chatbot, task_history, addfile_btn], [chatbot, task_history], show_progress=True)

        gr.Markdown("""\
<font size=2>Note: This demo is governed by the original license of Qwen-VL. \
We strongly advise users not to knowingly generate or allow others to knowingly generate harmful content, \
including hate speech, violence, pornography, deception, etc. \
(注:本演示受Qwen-VL的许可协议限制。我们强烈建议,用户不应传播及不应允许他人传播以下内容,\
包括但不限于仇恨言论、暴力、色情、欺诈相关的有害信息。)""")

    demo.queue().launch(
        share=args.share,
        inbrowser=args.inbrowser,
        server_port=args.server_port,
        server_name=args.server_name,
    )


def main():
    args = _get_args()
    model, tokenizer = _load_model_tokenizer(args)
    _launch_demo(args, model, tokenizer)


if __name__ == '__main__':
    main()
```
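The least obvious step in the script is how `predict` turns the Gradio history into a Qwen-VL prompt and how it cleans grounding tags out of the reply. The sketch below pulls that logic out so it can run without Gradio, ModelScope or a GPU. It is an illustration under stated assumptions, not part of the script or of Qwen-VL's API. The names `build_query` and `strip_grounding_tags` are hypothetical. The function assumes the same `task_history` shape the demo uses: text turns are `(text, answer)` and uploaded images are `((path,), None)`. The sample paths and box coordinates are made up.

```python
import re

# Same pattern as BOX_TAG_PATTERN in the script: a whole <box>...</box> span.
BOX_TAG_PATTERN = r"<box>([\s\S]*?)</box>"


def build_query(task_history):
    """Hypothetical helper mirroring predict(): fold image turns into the next text turn."""
    history_filter = []
    pic_idx = 1
    pre = ""
    for q, a in task_history:
        if isinstance(q, (tuple, list)):
            # An uploaded image is referenced as "Picture N: <img>path</img>".
            pre += f"Picture {pic_idx}: <img>{q[0]}</img>\n"
            pic_idx += 1
        else:
            # A text turn closes the pending prefix and becomes one (query, answer) pair.
            pre += q
            history_filter.append((pre, a))
            pre = ""
    # All pairs but the last are prior history; the last query is the new message.
    return history_filter[:-1], history_filter[-1][0]


def strip_grounding_tags(response):
    """Hypothetical helper: drop <ref>/</ref> markers and whole <box>...</box> spans."""
    text = response.replace("<ref>", "").replace("</ref>", "")
    return re.sub(BOX_TAG_PATTERN, "", text)


if __name__ == "__main__":
    # Made-up history: one uploaded image, one answered question, one new question.
    sample_history = [
        (("/tmp/dog.jpg",), None),
        ("What is in the picture?", "A dog on a beach."),
        ("Where is the dog?", None),
    ]
    prior, message = build_query(sample_history)
    print(prior)
    print(message)
    print(strip_grounding_tags("<ref>The dog</ref><box>(10,20),(300,400)</box> is on the left."))
```

With this sample, the image path is attached to the first question, and that question becomes the only prior turn. `"Where is the dog?"` is sent as the new message. The tag stripping leaves only `The dog is on the left.`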